Consuming APIs and Martial Arts of Databases

It’s been a while, and quite a bit has happened since then. Let’s start with the good stuff. Our latest Web Design task was to create something “cool”. This something cool had two conditions: it had to make use of an API, and it had to consume data from an endpoint.

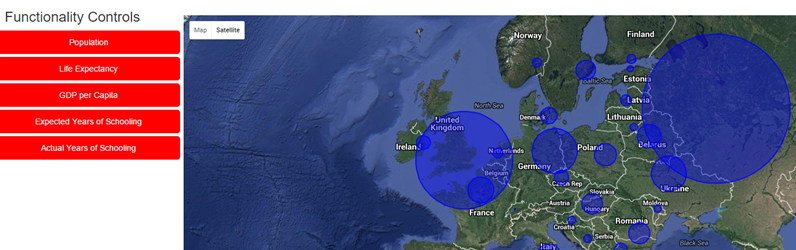

I decided early on that I wanted a world map with overlays to display data. Conveniently, one of the most versatile APIs out there is the Google Maps API. It allows presets and overlays, and it can be reloaded independently of the page. I made the API the centrepiece of the webpage and added a sidebar full of the various kinds of data I wanted to represent.

It’s all well and good saying, “I want to use a dataset that will give me the location of countries’ capital cities and the various demographics of said cities. Most of all, it has to be free and publicly available.” While there are many, many datasets that contain such information, finding a free one was like finding a needle in a haystack, even with the world’s most powerful search engine to help find that needle. After hours of searching I settled on a dataset that contained the coordinates for almost every world capital but was full of incorrect and null data for almost every demographic. My personal highlight was the description of Buenos Aires’ population being almost thirty million.

To remedy the issue, I went looking for alternative, correct datasets. Right now I’d like to share the love for the UN Data service. You can search for almost any human geography data for any year since the UN’s records began. Human Development Index, Population, Urban Area and Car Ownership were just a few of the niche datasets I was able to take advantage of.

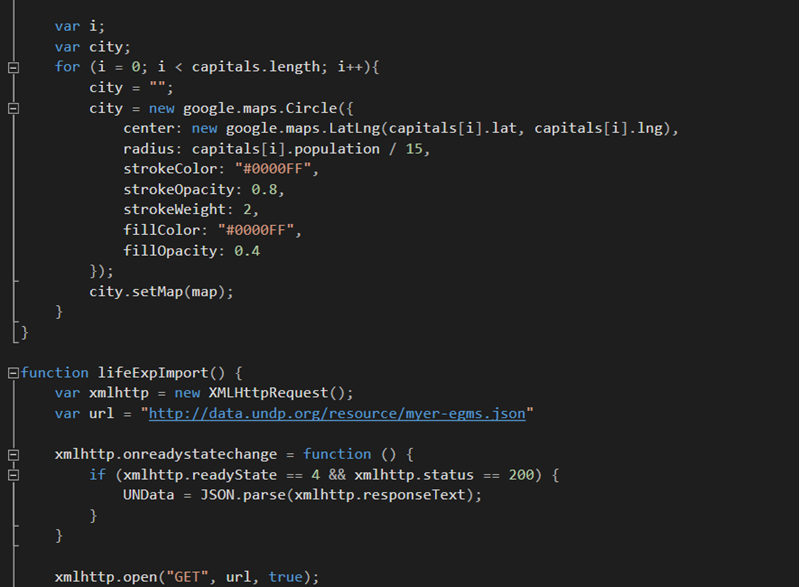

Actually implementing the data display was challenging. Each time a user clicked an option, the entire Google Maps API had to be reloaded. The JavaScript function that loaded the API then had to retrieve the coordinates of every world city and request the dataset the user had selected. Finally, the Google Maps API took the value for each city, applied a circular overlay to the map at that city, and used a calculation to weight the size of the overlay, forming a visual scale that was neither too big nor too small to mean anything.
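The weighting step at the end of that process can be sketched roughly as follows. This is not the code from the project: the radius bounds, the square-root scaling and the names `scaleRadius` and `buildCircleOptions` are all assumptions made for illustration. The object it returns has the `center` and `radius` fields that a `google.maps.Circle` accepts, but nothing here touches the Maps API itself.

```javascript
// Hypothetical bounds for the overlay radius, in metres (not from the original project).
const MIN_RADIUS_M = 20000;
const MAX_RADIUS_M = 500000;

// Map a raw dataset value onto a radius between the two bounds.
// Square-root scaling compresses large values, so the biggest cities
// do not swamp the map while the smallest stay visible.
function scaleRadius(value, minValue, maxValue) {
  if (maxValue === minValue) return MIN_RADIUS_M; // avoid dividing by zero
  const clamped = Math.min(Math.max(value, minValue), maxValue);
  const t = (clamped - minValue) / (maxValue - minValue); // 0..1
  return MIN_RADIUS_M + Math.sqrt(t) * (MAX_RADIUS_M - MIN_RADIUS_M);
}

// Build a plain options object for one city. The field names on `city`
// (lat, lng) are assumptions about how the coordinates were stored.
function buildCircleOptions(city, value, minValue, maxValue) {
  return {
    center: { lat: city.lat, lng: city.lng },
    radius: scaleRadius(value, minValue, maxValue),
  };
}
```

In a page that has already loaded the Maps API, the result could be spread into `new google.maps.Circle({ ...options, map })`, with `minValue` and `maxValue` taken from the selected dataset.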

Each piece of data I requested was thankfully in JSON format. The data was delivered as an array of objects, meaning that I could retrieve the matching country capital from several datasets by finding the country code, although this did involve a lookup function that matched two-character country codes to three-character ones. From there it was a matter of grabbing the desired key and value from that object to use in the workings of the function.
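A minimal sketch of that matching step, assuming one dataset keys its rows by two-character code and the other by three-character code. The field names (`iso2`, `iso3`, `city`, `lat`, `lng`) and the function name `joinByCountryCode` are hypothetical, and the lookup table is only a three-entry sample of the ISO 3166-1 codes.

```javascript
// Small illustrative sample of ISO 3166-1 alpha-2 to alpha-3 codes.
// A real table would cover every country.
const ALPHA2_TO_ALPHA3 = new Map([
  ["AR", "ARG"],
  ["FR", "FRA"],
  ["JP", "JPN"],
]);

// Join a capitals array (keyed by two-character code) with a statistics
// array (keyed by three-character code), keeping only the chosen value.
function joinByCountryCode(capitals, stats, valueKey) {
  // Index the statistics once so each capital is a constant-time lookup.
  const statsByCode = new Map(stats.map((row) => [row.iso3, row]));
  const joined = [];
  for (const capital of capitals) {
    const iso3 = ALPHA2_TO_ALPHA3.get(capital.iso2);
    const row = iso3 === undefined ? undefined : statsByCode.get(iso3);
    // Skip capitals with no code mapping, no matching row, or a null value.
    if (row === undefined || row[valueKey] == null) continue;
    joined.push({
      city: capital.city,
      lat: capital.lat,
      lng: capital.lng,
      value: row[valueKey],
    });
  }
  return joined;
}
```

Indexing the statistics by code first avoids rescanning the whole array for every capital, which matters once several datasets are being matched on each click.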

Through several iterations I managed to cut the load time for each dataset displayed on the Google Maps API from twenty seconds to about five. Although the final result wasn’t incredibly stylised, the webpage did its job well.

The steepest learning curve in the entire project was learning the various parameters that each dataset used for its data calls. Some datasets had to be called in two parts due to limitations, and others required bounding box measurements to correctly pull in that parameter’s data for the entire planet.
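To give a feel for what those parameters can look like, here is one possible way a whole-planet bounding box could be cut into two calls. The post does not say how the two-part calls were actually split, so this combination is purely an assumption, as are the names `splitBoundingBox` and `toBboxParam` and the `west,south,east,north` ordering, which varies between services.

```javascript
// Whole-planet bounding box in degrees of longitude and latitude.
const WORLD_BBOX = { west: -180, south: -90, east: 180, north: 90 };

// Cut a bounding box into equal-width vertical slices, one per request.
function splitBoundingBox(bbox, parts) {
  if (!Number.isInteger(parts) || parts < 1) {
    throw new RangeError("parts must be a positive integer");
  }
  const width = (bbox.east - bbox.west) / parts;
  const slices = [];
  for (let i = 0; i < parts; i++) {
    slices.push({
      west: bbox.west + i * width,
      south: bbox.south,
      // Use the exact eastern edge on the last slice to avoid rounding drift.
      east: i === parts - 1 ? bbox.east : bbox.west + (i + 1) * width,
      north: bbox.north,
    });
  }
  return slices;
}

// Serialise a box as "west,south,east,north" (hypothetical parameter format).
function toBboxParam(bbox) {
  return [bbox.west, bbox.south, bbox.east, bbox.north].join(",");
}
```

Calling `splitBoundingBox(WORLD_BBOX, 2)` yields a western and an eastern hemisphere, each of which could then be sent as its own request and the results concatenated.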

In summary, the final result perhaps wasn’t the coolest; what was more interesting was seeing up close the kind of framework that goes into all manner of data displays and Google Maps API integrations across the internet.

Since then, Joe and I have moved on to learning Database Design and Management. There are new ways of looking at data that I’d never considered. After the hours of studying done so far, the way of the database is not quite as mysterious as it was when we were first introduced to the concept.

We’ve been quick to pick up some good practices, and with assurances from Tony that we will eventually “just get it”, we’ve been pressing forward. Certain circumstances have disrupted our learning of late, but I’ve been studying more on the subject nonetheless.